The paper gave Mistral one line.

“The Paris-based startup’s models demonstrate that a European company can compete at the frontier.” That was the full treatment. A sentence in a footnote in the software stack chapter, between Meta’s Llama and Microsoft’s Phi series.

It should have been a chapter.

Because the case for Mistral is not just about one company’s benchmark scores. It is about what open weights actually mean for European organisations that need to run AI legally, locally, and on infrastructure they can audit. And it is about a question the paper left partly open: can a European AI lab remain European?

That question is worth asking carefully. The answer is not yet settled.

The Weight Argument

Open weights change the compliance calculation in a way that no closed model can match — not contractually, not architecturally, not legally.

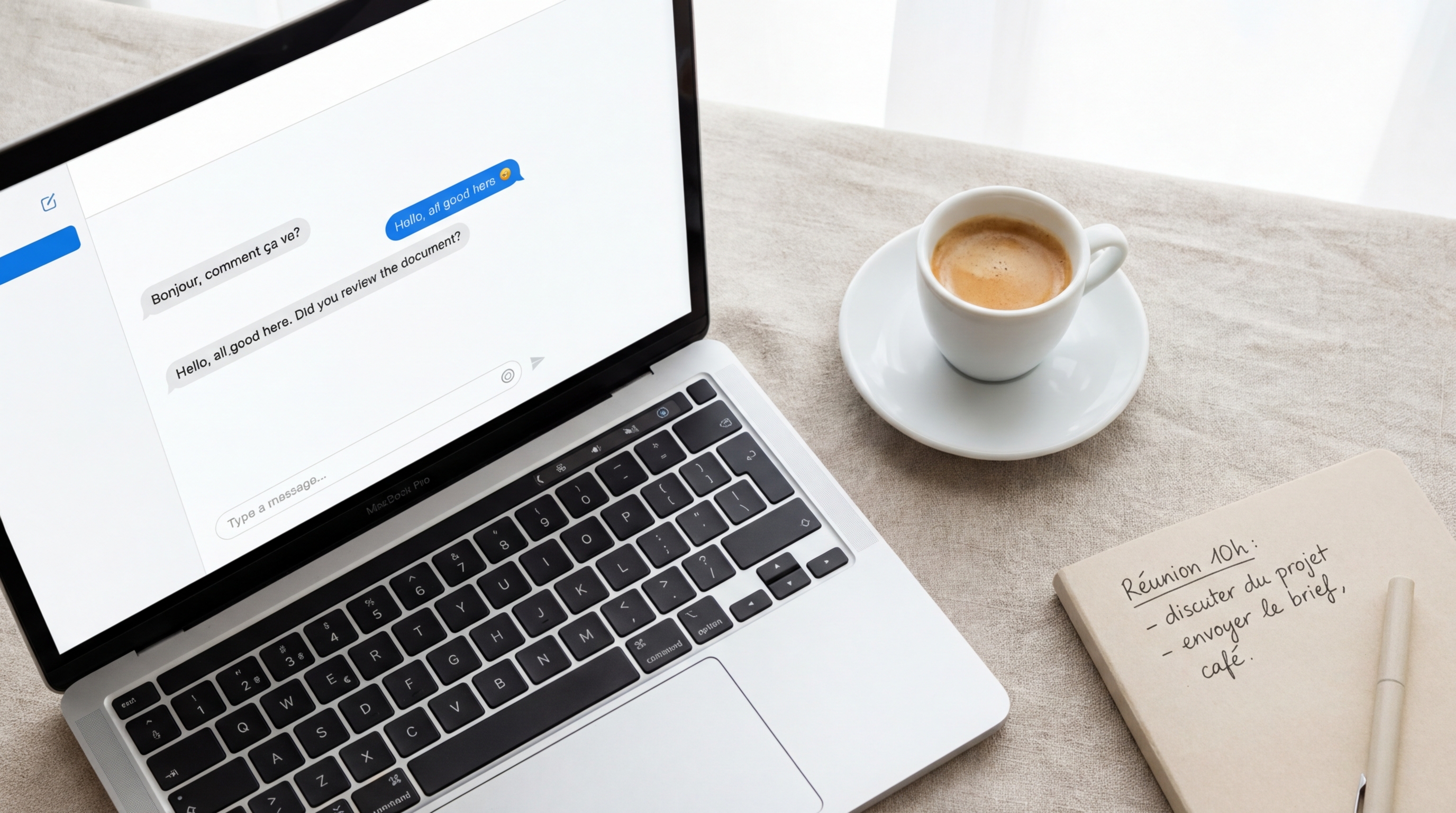

When an organisation deploys a closed-source model — GPT-4o, Gemini, Claude — it is not running AI. It is using a remote AI service, operated by a US company under US law, with inference happening on infrastructure outside the organisation’s control. Every prompt submitted is processed by a foreign entity. Model behaviour is governed by the provider’s terms of service. Compliance audits cannot verify what happens inside the model because the model is not accessible.

Consider a Belgian hospital running a patient triage assistant, a youth care organisation processing records covered by GDPR Article 9, or a regional accounting firm handling client tax data under DORA. For every regulated-sector deployer the paper identified, “I trust the provider” is not a legal position. It is a liability transfer that the regulator does not recognise as compliance.

Open weights dissolve this problem architecturally. The model lives on the organisation’s own infrastructure. The weights are inspectable. Inference is local. No data leaves. No US jurisdiction applies at the point of inference. A data protection impact assessment has something concrete to assess — not a contract, but a model file running on a server in Ghent.
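To make "something concrete to assess" tangible, here is a minimal sketch of one check an auditor could run against a locally stored weights file: compute its SHA-256 digest and compare it with the digest recorded in the assessment. The function names and the idea of a "recorded digest" are illustrative assumptions, not part of any Mistral tooling or regulatory procedure.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, read in chunks so that
    multi-gigabyte weight files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps reading until f.read() returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def weights_match_record(path, recorded_hex):
    """Hypothetical audit check: does the file on disk match the digest
    that was written into the assessment when the model was approved?"""
    return sha256_of_file(path) == recorded_hex.strip().lower()
```

A check like this only proves that the file is the one that was assessed; it says nothing about the model's behaviour. The point is that with a closed, remote model there is no file to run it against at all.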

The paper named the convergence of open-weight model quality with proprietary models on domain-specific tasks as one of three compounding drivers that would push European local inference to 70–90% by 2028–2030. In practice, that convergence means the compliance path is no longer a compromise path. Organisations in regulated sectors do not have to choose between a good model and a compliant one. That choice has closed.

Mistral is why.

The Paris Proof

What Mistral has actually built is easy to understate if you only track the company through its funding rounds.

Mistral 7B was a market signal when it appeared in 2023: a European lab had produced a model that outperformed significantly larger US models on standard benchmarks, openly licensed, at a parameter count that runs on a workstation GPU. Mixtral 8x7B — a sparse mixture-of-experts architecture that routes inputs through specialised expert networks rather than activating the full model — posted performance competitive with GPT-3.5 at a fraction of the compute cost. Mistral Large 2 placed the Paris lab in genuine frontier territory.
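The sparse mixture-of-experts idea can be sketched in a few lines. The toy below scores every expert with a gate, runs only the top two, and mixes their outputs with softmax weights. This is the general top-k routing technique, not Mistral's implementation: the "experts" are trivial scaling functions standing in for feed-forward networks, and every name and number here is an illustrative assumption.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    # Hypothetical expert: multiplies the input by a constant. A real
    # expert would be a feed-forward network with its own weights.
    return lambda x: [scale * v for v in x]


def route_top_k(token, gate_weights, experts, k=2):
    """Run only the k best-scoring experts for this token and return
    (mixed_output, indices_of_experts_used)."""
    # One gate score per expert: dot product of its gate vector and the token.
    scores = [sum(w * v for w, v in zip(gw, token)) for gw in gate_weights]
    # Select the k highest-scoring experts; the rest are never evaluated.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Normalise the selected scores so the mixing weights sum to 1.
    weights = softmax([scores[i] for i in top])
    output = [0.0] * len(token)
    for w, i in zip(weights, top):
        expert_out = experts[i](token)
        output = [o + w * e for o, e in zip(output, expert_out)]
    return output, top


if __name__ == "__main__":
    random.seed(0)
    dim, n_experts = 4, 8
    experts = [make_expert(i + 1) for i in range(n_experts)]
    gate_weights = [[random.uniform(-1, 1) for _ in range(dim)]
                    for _ in range(n_experts)]
    output, used = route_top_k([0.5, -1.0, 0.25, 2.0], gate_weights, experts)
    print("experts used:", used)
```

With eight experts and k=2, only a quarter of the expert parameters are touched per token, which is where the compute saving comes from.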

Le Chat is now a real consumer product with millions of users. Its market positioning is explicit: a European alternative to ChatGPT with data governance that a European organisation can actually verify. France’s public administration has engaged with Mistral specifically because of this — public institutions need AI they can cite in a data protection impact assessment without relying on a contractual promise from a provider operating under foreign law.

This is not just parity on showcase benchmarks. In domain-specific contexts (structured document analysis, legal reasoning, code generation, multilingual European text), Mistral’s open models either match or closely approach the closed frontier. For the specific tasks that regulated European organisations actually perform, the capability gap has closed.

The paper was right to call this convergence. It was wrong to treat it as future-tense.

Counter-Move Five

Here is where the story becomes more complicated.

The paper named five Big Tech counter-moves designed to slow the structural shift to local AI. The fifth was acquisition: “acquiring promising European local AI companies before they become competitors. The pattern is well-established: Google’s acquisition of DeepMind (London), Microsoft’s investment in Mistral AI (Paris), and ongoing acquisition interest in edge AI startups.”

Mistral was already in the paper — not only as a European success story, but as a named example of the acquisition counter-move.

Microsoft’s investment in Mistral in 2024 was structured as a distribution deal: Microsoft would offer Mistral models through Azure, and Mistral would gain cloud infrastructure access and enterprise reach. The European Commission monitored it under merger regulations, which was itself a signal that Brussels understood the strategic dimension, but did not block it.

The tension is structural and it does not resolve cleanly. Mistral needs capital to train frontier-class models and sustain a consumer product at scale. Capital at this level comes with board relationships, preferred distribution channels, and commercial dependencies. The dependencies are precisely what the sovereignty argument says European organisations should be moving away from.

Mistral’s leadership has been consistent in its public position: the open weights are non-negotiable. Models such as Mistral 7B and Mixtral 8x7B were released under the permissive Apache 2.0 licence, so even if Mistral’s ownership structure changed, the weights already released under those terms would remain in European hands, in European data infrastructure, inspectable by European auditors.

That matters. But the pressure to close — to build a proprietary moat, to follow OpenAI into sealed enterprise models, to capture the revenue that closed frontier products generate — is real and ongoing. Every major AI lab that started open has faced this inflection point. Most did not remain open past it.

The story is not over.

The Training Gap

The paper identified an honest limitation: “Europe controls inference but does not control the training pipeline.” Virtually all significant open-weight models in 2026 were trained on centralised US infrastructure. The mitigation pathways it listed were federated learning, European HPC clusters (JUPITER, LUMI, MareNostrum 5), and European-origin labs.

Mistral is one of the few organisations on this list that operates commercially at frontier scale. GENCI and the French national computing infrastructure have supported Mistral’s training runs. LUMI — the Finnish-hosted European supercomputer — has been used by European AI labs for exactly this purpose. JUPITER, commissioned for 2024–2025, adds another tier of European training capacity.

This is not yet sufficient to close the gap entirely. Training at the scale needed for frontier-class general models still requires compute that European public infrastructure is only now approaching. But two things are changing simultaneously: European compute capacity is growing, and Mistral is already in frontier territory on less of it — which means the training gap closes from both ends.

The IPCEI-AI programme — the funding vehicle The Sovereign Factory article identified for European AI infrastructure investment — includes training capacity as an explicit objective. If that funding reaches Mistral-class European labs at scale, and if those labs remain open-weight-committed, the scenario the paper was optimistic about becomes structurally achievable: a European AI model stack, trained on European infrastructure, running on European hardware, auditable end to end.

The sovereign factory produces the compute. Mistral is the model. Axelera is the chip. The stack is assembling.

What the Scorecard Says

The paper built its 70–90% local inference projection on three compounding drivers. Regulatory compliance pressure. NPU hardware reaching 50–85 TOPS in business laptops by 2027. And open-weight model quality converging with proprietary models for domain-specific tasks.

The paper said explicitly: if any one driver stalls — if open-weight quality plateaus — the lower bound becomes more likely.

It has not plateaued. The European regulated-sector organisations the paper identified as the primary return cohort — healthcare, finance, legal, public administration — can now run models that are both locally deployable and domain-competitive. The choice between compliance and capability has closed. That was the assumption the entire projection rested on. It has been confirmed in production, not in a research lab.

Scorecard update: 11 of 30 predictions assessed. 11 in the right direction. The open-weight convergence driver — one of the three foundations of the full projection — moves from “expected” to “confirmed.”

The remaining open question is not whether Mistral can compete. That is settled. The question is who it competes for — and whether the weights that make European compliance possible remain freely available when the acquisition pressure reaches its next inflection point.

Europe’s best answer to that question is not to trust Mistral’s leadership. It is to build the regulatory and funding environment that makes staying open the rational choice. The statute book that The Conscience Clause called the right protection for AI ethics applies here too. If open weights are a compliance requirement for Article 9 regulated sectors, Europe should say so — in law, not in contract.