For the past fifteen years, the default assumption in AI architecture has been simple: serious AI lives in the cloud.

Training happens in hyperscale datacenters. Inference is served from centralized APIs. Devices are clients. The cloud is the brain.

That assumption is about to invert.

Not because of hype. Not because of edge-computing fashion. And not because the cloud is failing.

But because four forces are converging in Europe at the same time: geopolitics, environmental limits, hardware evolution, and — most importantly — the EU AI Act.

Together, they create a structural push toward a new default:

From cloud-first to local-first, cloud-extended.

This is not a prediction about technology trends. It is a consequence of regulation, physics, and silicon.

1. Geopolitics: Digital sovereignty is no longer abstract

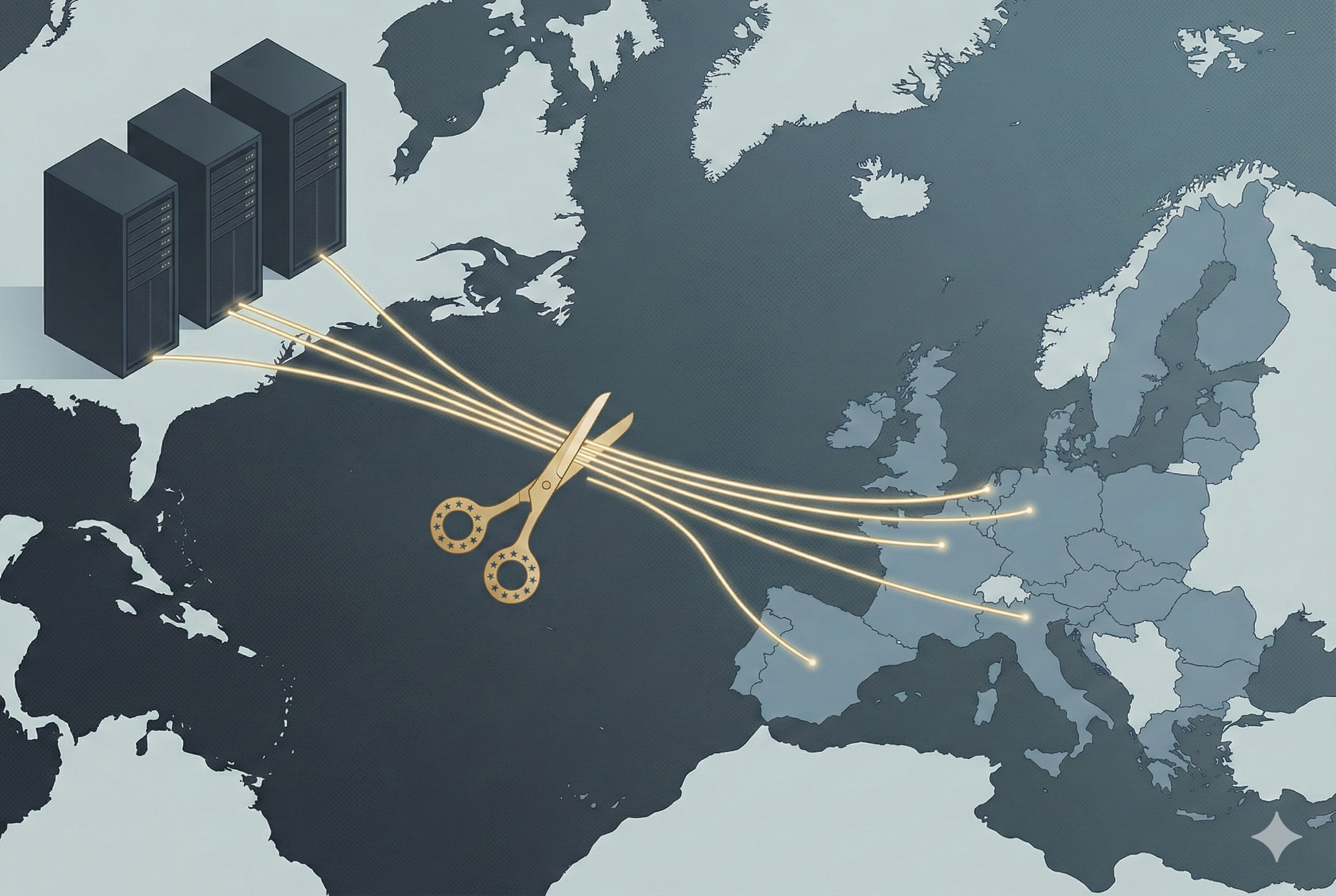

Europe has learned a hard lesson over the last decade: critical digital infrastructure is concentrated outside its borders.

Most AI infrastructure today depends on three US hyperscalers that control approximately 67% of the global cloud market. The US CLOUD Act allows American authorities to compel access to data regardless of where it is stored. The Schrems II ruling has destabilized the legal basis for transatlantic data flows. Export controls have shown how fragile these dependencies can be.

When governments, healthcare providers, and public institutions deploy AI on foreign cloud infrastructure, this is no longer just an IT choice. It is a sovereignty risk.

Running AI locally — on European hardware, on controlled infrastructure — drastically reduces that dependency at the architectural level.

2. Environment: The energy and water cost of centralized AI

Hyperscale AI is resource-intensive. Large datacenters require enormous amounts of electricity and water for cooling. As generative AI usage grows, so does inference traffic.

Inference, not training, becomes the dominant cost at scale.

Europe is already facing grid congestion, water scarcity, and strict sustainability goals. Scaling AI purely through centralized datacenters runs directly into physical limits.

But modern devices — laptops, workstations, embedded systems — are becoming remarkably efficient at AI inference. Running inference locally can be an order of magnitude more energy-efficient than routing every request through a remote datacenter.

At scale, local inference is not just cheaper. It is environmentally necessary.

3. Hardware: Devices are no longer "dumb terminals"

Until recently, local AI was unrealistic. Devices simply lacked the compute.

That is changing fast.

New generations of chips from AMD (55 TOPS), Qualcomm (80–85 TOPS), and Intel (48 TOPS) now include dedicated NPUs (Neural Processing Units) — purpose-built for AI inference at very low power. Europe's own Axelera AI delivers up to 629 TOPS. SiPearl, Kalray, and Semidynamics are building sovereign European silicon.

The model layer is also taking shape in Europe. Mistral AI (Paris) produces open-weight models that run locally and reach frontier-class performance on domain-specific tasks, making them the compliant-by-default choice for regulated-sector organisations that cannot send data to external APIs.

A modern workstation can now run multi-billion-parameter models locally that, three years ago, required cloud servers.
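As a rough back-of-the-envelope check on that claim, the sketch below estimates the memory needed just to hold a model's weights at different precisions. The parameter counts and bit widths are illustrative assumptions, not figures from this article, and the estimate deliberately ignores activations, KV cache, and runtime overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical model sizes and precisions, chosen only for illustration.
for params in (7, 13, 70):
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{params}B parameters @ {bits}-bit: ~{gb:.1f} GB")
```

Under these assumptions, a 7-billion-parameter model quantized to 4 bits needs roughly 3.5 GB for its weights, while the same model at 16-bit precision needs about 14 GB. Lower-precision weights are a large part of why models of this size now fit on a single machine.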

This hardware shift is not theoretical. It is already in consumers' hands.

The capability for local AI is arriving exactly when regulation and environmental constraints make it desirable.

4. The EU AI Act: The real turning point

The EU AI Act (Regulation (EU) 2024/1689) introduces strict requirements for AI systems, especially those in high-risk contexts: healthcare, education, HR, and public services. For the most serious violations, non-compliance carries fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher.
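To make the scale of that ceiling concrete, here is a minimal sketch of the arithmetic. It assumes the "whichever is higher" rule that the Act applies to undertakings in its top penalty tier; the function name and the turnover figures are hypothetical, and the calculation is a simplification, not legal advice.

```python
def max_fine_eur(worldwide_turnover_eur: int,
                 fixed_cap_eur: int = 35_000_000,
                 turnover_percent: int = 7) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    turnover_based = worldwide_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical companies, for illustration only.
for turnover in (100_000_000, 500_000_000, 2_000_000_000):
    ceiling = max_fine_eur(turnover)
    print(f"Turnover EUR {turnover:,} -> ceiling EUR {ceiling:,.0f}")
```

In this sketch the fixed €35 million cap dominates until turnover reaches €500 million; beyond that point the 7% share sets the ceiling.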

These requirements include:

- Data governance (Art. 10) and traceability through record-keeping (Art. 12)

- Technical documentation (Art. 11) and transparency toward deployers (Art. 13)

- Control over data flows

- Clear accountability for system behavior

For organizations, this creates a practical problem: it is far easier to comply with these requirements if sensitive data never leaves the device or local infrastructure.

If inference happens locally:

- No personal data needs to be sent to external APIs

- Data residency is easier to guarantee

- Compliance and auditing become simpler

- Risk surfaces shrink dramatically
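As one illustration of how auditing can stay on local infrastructure, the sketch below builds a tamper-evident log entry that stores hashes of the prompt and output instead of the raw text. The function name, field names, and hash-chaining scheme are assumptions made for this example; they are not prescribed by the AI Act, and whether hashed records satisfy a given compliance regime is a legal question outside the scope of this sketch.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_id: str, prompt: str, output: str, prev_hash: str) -> dict:
    """Build one log entry that links to the previous entry by hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        # Store digests only, so the raw text never enters the log.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "prev_hash": prev_hash,
    }
    # Hash the entry itself so later modification can be detected.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["entry_hash"] = hashlib.sha256(payload).hexdigest()
    return entry


# Hypothetical usage: two chained entries, starting from an all-zero hash.
first = audit_record("local-model-v1", "example prompt", "example output", "0" * 64)
second = audit_record("local-model-v1", "next prompt", "next output", first["entry_hash"])
print(json.dumps(second, indent=2))
```

Because each entry embeds the hash of its predecessor, altering an earlier record breaks the chain, which gives an auditor a simple integrity check without any data leaving the machine.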

Suddenly, local AI is not just technically possible and environmentally desirable — it becomes legally attractive. For many organizations, it will be the path of least resistance.

What this means in practice

This does not mean the cloud disappears.

Training large foundation models will still happen in datacenters. Cloud services will still exist. But the role of the cloud shifts.

Instead of:

Cloud as the default runtime for AI

We move to:

Devices and local infrastructure as the default runtime, with the cloud as extension.

The cloud becomes the layer for model distribution, updates, heavy training, and coordination. It is no longer the place where every user interaction must be processed.
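One way to picture "local-first, cloud-extended" is as a routing policy. The sketch below is a hypothetical illustration: the `Request` fields, the `local_capacity_tokens` threshold, and the routing rules are assumptions made for this example, not part of any specific framework or of the Act itself.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    prompt_tokens: int
    contains_personal_data: bool


def route(request: Request, local_capacity_tokens: int = 8_000) -> Target:
    """Local by default; the cloud only extends capacity for non-sensitive work."""
    # Sensitive data stays on local infrastructure regardless of size.
    if request.contains_personal_data:
        return Target.LOCAL
    # Non-sensitive requests that exceed local capacity may go to the cloud.
    if request.prompt_tokens > local_capacity_tokens:
        return Target.CLOUD
    return Target.LOCAL


# Hypothetical requests, for illustration only.
print(route(Request(prompt_tokens=500, contains_personal_data=True)))
print(route(Request(prompt_tokens=50_000, contains_personal_data=False)))
```

The design choice worth noting is the order of the checks: the data-sensitivity rule runs first, so capacity pressure can never push personal data off the device. A real system would also need a strategy for sensitive requests that exceed local capacity, such as chunking or queuing, which this sketch leaves out.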

A structural shift, not a trend

Individually, none of these forces would be enough.

But together, they create a structural shift that is particularly strong in Europe:

- Geopolitical pressure to reduce dependency

- Environmental pressure to reduce centralized load

- Hardware that suddenly makes local inference viable

- Regulation that makes local processing legally attractive

This combination makes it highly likely that, over the coming years, a large share of AI inference in European organizations will move from cloud APIs to local execution on devices and controlled infrastructure.

Not because it is fashionable. But because it is the most practical, compliant, and efficient option.

Why this matters now

Enforcement of the EU AI Act is beginning. Organizations are starting to translate legal texts into architectural decisions.

Those decisions will shape AI infrastructure for the next decade.

Understanding that the default is inverting — from cloud-first to local-first, cloud-extended — helps policymakers, technologists, and organizations anticipate what is coming instead of reacting to it later.

The shift to local AI is not a niche technical trend.

It is a predictable outcome of how Europe is choosing to regulate, power, and govern AI.

This article summarizes the key arguments from The Great Return: Why 2026 Marks the Tipping Point for Local AI Migration in Europe — a 12,000-word research paper covering the geopolitical, regulatory, hardware, and environmental forces driving this structural shift. The full paper is available on Zenodo.