Yesterday’s Parliament vote fixed hard dates for the AI Act. Update #7 covered the headline: 2 December 2027 is now a real deadline, not a planning horizon. The fourth structural force moved from theoretical to active.

What Update #7 did not cover was what else was in the same vote.

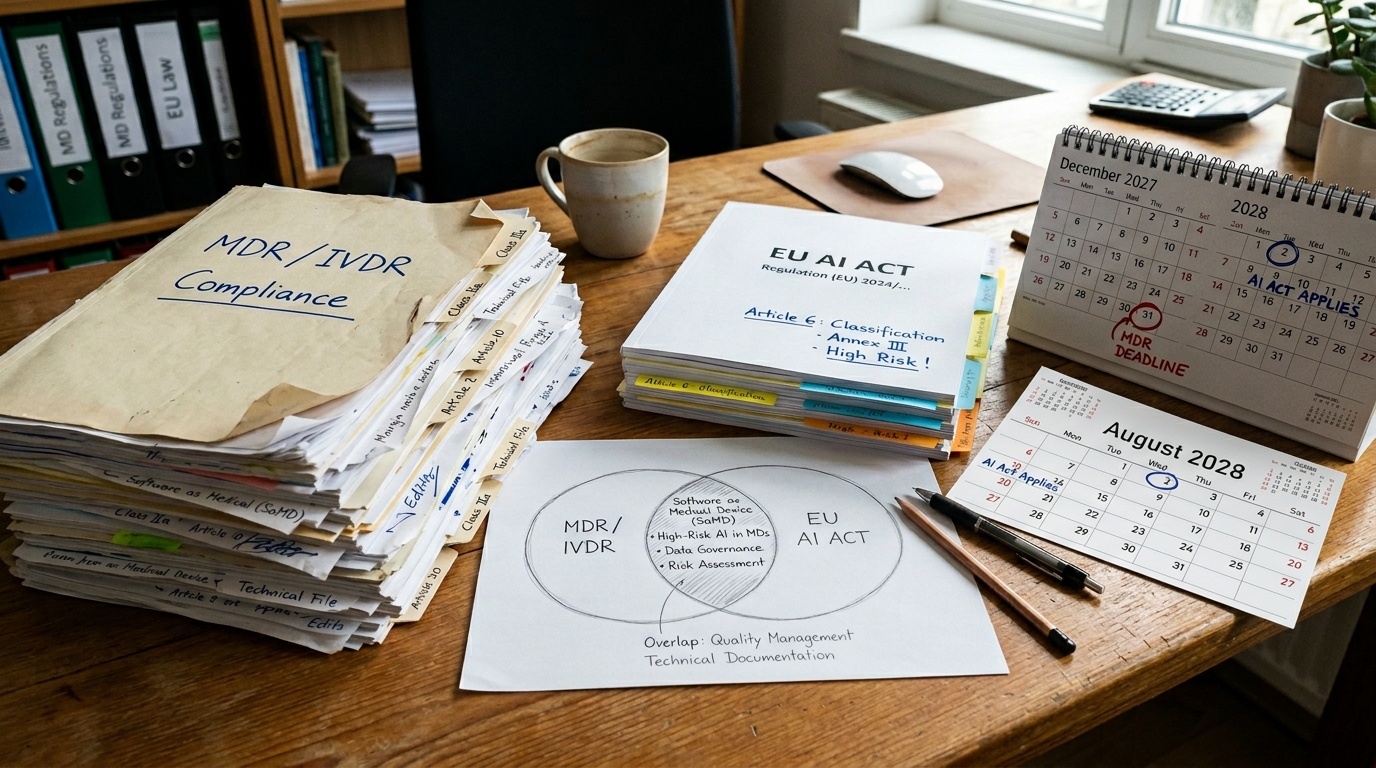

The AI Act Omnibus contains a provision on sectoral overlap. Where AI systems are built into products already regulated under sector-specific EU law, such as medical devices, in vitro diagnostics, radio equipment and toys, the AI Act's obligations may be reduced. The applicable deadline for this category is 2 August 2028. That is a year later than the AI Act's original August 2027 date for these products, and eight months after the December 2027 deadline for standalone high-risk systems. And in some cases, the compliance burden is explicitly lighter, since the sector law is judged to already provide equivalent or stronger safeguards.

On the surface, this looks like a weakening of the paper’s argument for healthcare. The paper projected healthcare at the upper bound of local AI adoption: 90% by 2028–2030. The fourth structural force was supposed to be accelerating that shift. Has the Omnibus taken the foot off the pedal?

No. But the reason matters.

What the Sectoral Overlap Actually Does

The sectoral overlap provision is targeted. In healthcare, it covers AI embedded in products regulated under the Medical Devices Regulation (MDR) or the In Vitro Diagnostic Medical Devices Regulation (IVDR). These are diagnostic software systems, AI-assisted imaging tools and clinical decision support embedded in regulated medical devices.

For these systems, where the MDR or IVDR already requires clinical validation, post-market surveillance, and quality management systems, the AI Act does not impose a duplicate layer on top. The compliance burden is rationalised, not eliminated. The AI Act’s core requirements — transparency to patients, bias documentation, human oversight — still apply. What is reduced is the duplication of documentation across two regulatory frameworks that were targeting the same risk from different angles.

This is good law. Duplicate compliance overhead does not make patients safer. It makes compliance teams slower.

But notice what is not in the sectoral overlap: general clinical AI that is not embedded in a regulated medical device. A GP's AI-assisted diagnostic support. A hospital's patient triage system. An AI that reads GP notes and surfaces pattern alerts. A mental health platform using natural language to flag risk. Unless they are certified as medical devices in their own right, these are not MDR products. Those classed as high-risk under Annex III of the AI Act, which explicitly lists emergency healthcare patient triage, face the full AI Act timeline. December 2027. In full.

The Argument the Omnibus Cannot Touch

The paper projected healthcare as the sector with the highest local AI adoption rate for a reason that has nothing to do with the AI Act.

It has to do with GDPR Article 9.

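Health data is special category data under GDPR. Processing it requires one of the narrow conditions in Article 9(2) on top of an ordinary lawful basis, appropriate security measures and, for transfers outside the European Economic Area, a valid transfer mechanism. Post-Schrems II, that mechanism is fragile: the EU-US Data Privacy Framework covers only certified US recipients and remains open to legal challenge, and standard contractual clauses require a case-by-case assessment of the recipient country's surveillance law. When an organisation processes patient health data through a US cloud API, it is not facing a potential fine in 2027. It is potentially in breach today.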

The AI Act Omnibus does not amend GDPR. GDPR is a separate regulation with its own enforcement machinery, its own supervisory authorities and its own fines: up to €20 million or 4% of worldwide annual turnover, whichever is higher, for infringements of the core processing principles (Article 9 included) and of the rules on international transfers.

A hospital that relaxes its AI Act compliance timeline because the Omnibus allows it does not thereby reduce its GDPR exposure. A patient whose imaging data was sent to an AWS inference endpoint in Virginia may have grounds for a GDPR complaint whether the AI Act audit falls due in December 2027 or August 2028.

NIS2 and the Critical Infrastructure Layer

Healthcare is listed as a sector of high criticality under the NIS2 Directive, which member states were required to transpose by 17 October 2024. NIS2 requires in-scope healthcare entities to implement appropriate cybersecurity risk-management measures, including supply chain security. For an organisation using AI tools, that supply chain includes its AI providers.

An AI system that runs on a foreign cloud provider sits in the healthcare organisation’s supply chain. NIS2 requires that supply chain to be assessed, documented, and secured. When the supply chain includes a US hyperscaler subject to CLOUD Act compelled access, the NIS2 assessment must account for that risk. There is no NIS2 exception for AI tools that are otherwise helpful and well-marketed.

The Omnibus softens AI Act compliance for embedded medical devices. NIS2 does not have a medical device exception. It applies to the healthcare entity as a whole, including all the AI it uses.

The Patient Trust Argument

The structural forces in the paper are geopolitical, environmental, hardware-based, and regulatory. There is a fifth force the paper treats as secondary but that is gaining weight: what patients expect.

Healthcare in Europe is publicly funded or heavily regulated in most member states. The public relationship to health data is different from the relationship to e-commerce data or social media data. People have a broadly shared intuition that their GP records, their mental health history, their genetic markers, and their diagnostic imaging should not be processed by a commercial AI company whose primary obligation is to its shareholders in California.

That intuition does not require legal education. It does not require reading the CLOUD Act or GDPR Article 9. It is a straightforward social norm: my health data stays here.

Healthcare providers that deploy cloud-based AI in clinical settings face this norm in patient consent flows, in media coverage when something goes wrong, and in the political environment where health procurement decisions are scrutinised. The AI Act Omnibus does not change any of this. It adjusts a compliance deadline for a specific subset of medical devices. It does not relax the expectation that patient data remains under European control.

What the Omnibus Actually Changes for Healthcare

The honest assessment is narrow but real.

For manufacturers of regulated medical AI (imaging AI, surgical robotics software, in vitro diagnostic systems), the Omnibus provides a cleaner compliance path and a later deadline. This reduces the acute pressure on a specific type of organisation in a specific compliance window. It is a proportionate measure, because these organisations already operate under the clinical and performance evaluation requirements of the MDR and IVDR.

For the broader healthcare AI ecosystem, meaning the many clinical AI deployments that are not embedded in regulated devices, nothing has changed. December 2027 applies to those classed as high-risk. GDPR applies now. NIS2 applies now. The patient trust argument applies always.

The organisations that will move to local AI fastest in healthcare are not the ones responding to the AI Act compliance clock. They are the ones that understand why patient data cannot be on foreign infrastructure regardless of what the AI Act says. The Omnibus may slow the compliance-motivated organisations. It does not slow the sovereignty-motivated ones.

The paper’s upper bound for healthcare — 90% local inference by 2028–2030 — was always based on the combination of forces, not on AI Act compliance alone. That combination is unchanged.

A Counterintuitive Implication

There is an argument that the Omnibus, by reducing compliance pressure on the slowest actors, actually improves the quality of the healthcare local AI ecosystem.

The organisations that move only because of a compliance deadline tend to move at the last possible moment, in the minimum compliant configuration. Their motivation is avoidance, not architecture. These organisations will build local AI deployments that satisfy the letter of the requirement and nothing more.

The organisations that move because they genuinely understand that patient data belongs on European infrastructure will build the right architecture. They will think about data provenance, audit trails, local model governance, and long-term sovereignty — not because a deadline forced them, but because they understand what they are protecting.

By softening the AI Act deadline for a subset of medical devices, the Omnibus may thin out the first wave of compliance-driven rush deployments. What remains are the healthcare sector's most thoughtful movers. That is not a worse outcome. It may be a better one.

The Prediction Stands

The paper identified healthcare as the sector facing the strongest convergence of all four forces: a geopolitical case for keeping patient data on European soil, an environmental case for energy-efficient edge inference in distributed clinical settings, hardware mature enough to run diagnostic AI on a ward-level NPU node, and a regulatory environment that makes local deployment the path of least resistance.

The Omnibus has adjusted one element of the fourth force, for one subset of the sector, by one year.

The other three forces have not been adjusted. GDPR has not been amended. NIS2 has not been softened. The hardware is arriving on its own timeline. The patient trust argument is growing, not shrinking.

The 90% projection stands.