Tor was built by the United States Navy.

The Onion Router was designed in the mid-1990s by Naval Research Laboratory mathematicians who needed a way to protect American intelligence communications online. The technology was elegant: traffic was wrapped in multiple encrypted layers and bounced through a chain of relays, each relay knowing only the hop before it and the hop after it, the origin invisible to anyone watching the network. Perfect for intelligence officers operating in hostile countries. Perfect for dissidents communicating under authoritarian regimes. Perfect for whistleblowers, journalists, and privacy advocates who needed to operate without surveillance.

It also became the infrastructure of Silk Road, the world's first large-scale darknet drug market. And of markets that sold weapons, stolen credentials, child abuse material, and contract violence. The same anonymity that protected a CIA officer in Moscow protected a heroin dealer in Rotterdam. The technology did not change. The hands did.

Local AI is following the same path. And the first symptoms are already visible.

We Need to Talk About the Other Side

The Great Return argues that local AI is the structural solution to data sovereignty, compliance, and privacy. That argument stands. The hardware is real. The regulation is real. The migration is happening.

But a serious investigation into local AI cannot look only at the benefits and call it research. The same forces that make local AI valuable for a Belgian accountant protecting client data — no cloud dependency, no logging, no third-party visibility, no jurisdiction — make it equally valuable for someone whose use case does not appear in any compliance framework.

This is not an argument against local AI. It is an argument for intellectual honesty about what we are describing when we describe a technology that is powerful, private, and outside any provider relationship.

The basement in Minsk has the same hardware catalog as the office in Eindhoven.

The Market That Built Itself

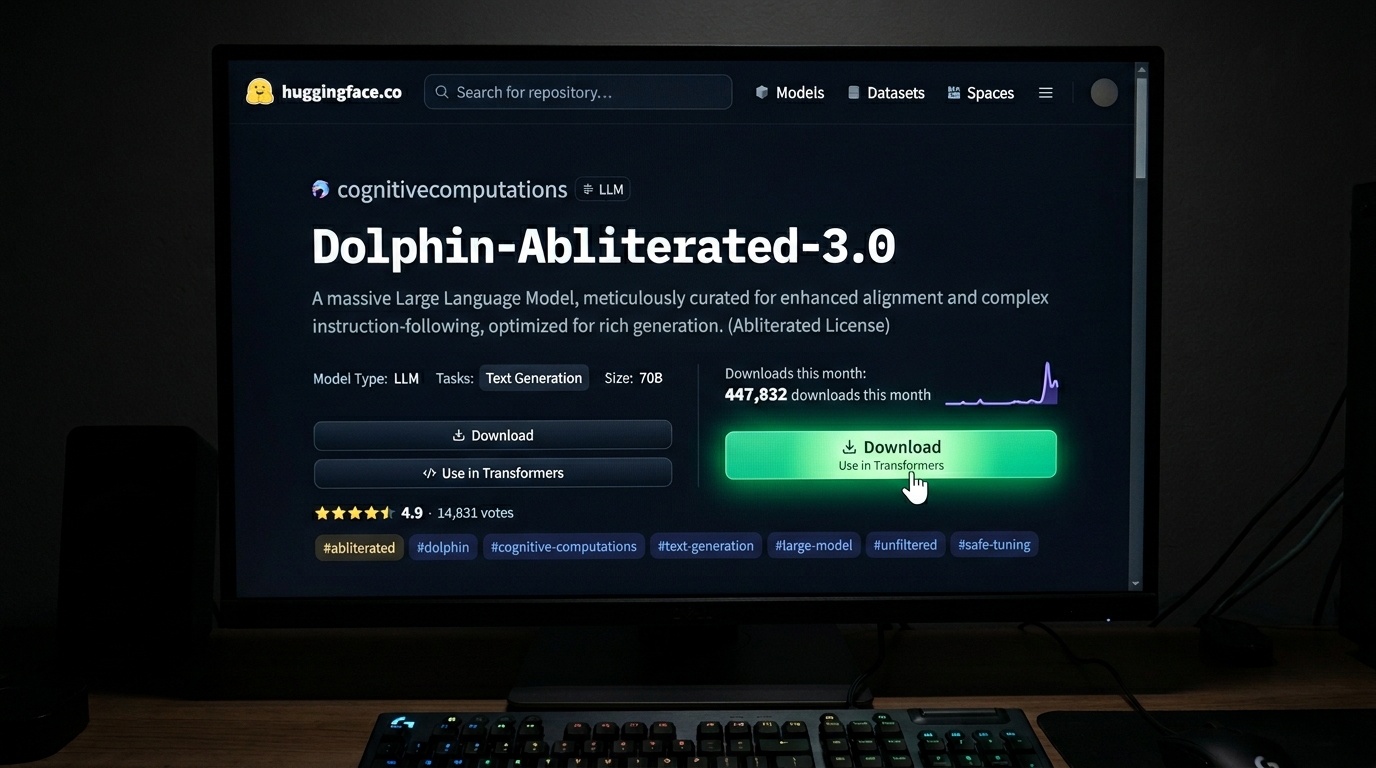

The terminology has evolved. In 2026, "uncensored" has largely been replaced by more technically precise labels: Abliteration (the surgical removal of refusal behavior from a model through direct weight manipulation), along with terms like Heretic, Derestricted, and Unfiltered. The models have become more sophisticated. The distribution has become more professional.

They are not hidden. They live on HuggingFace — the same infrastructure that hosts the legitimate open-weight models European enterprises use for compliance-friendly local AI deployment. No darknet required. No special access. No registration beyond a free account. The URL is public. The download is free. Installation via Ollama: one command.

| Model | Character | Downloads/month |
|---|---|---|
| Dolphin 3.0 (Llama 3.1/3.2) | The established standard. Removes refusals while improving reasoning and coding. Most stable choice for daily use. | ~450k |
| Nous Hermes 3 | Not marketed as uncensored but known for extreme freedom in roleplay and creative writing. Follows instructions without moral commentary. | ~320k |
| HauhauCS Qwen3.5 Uncensored | Trending March 2026. First strong uncensored version of the Qwen3.5 architecture. "Aggressive" variant: documented 0% refusal rate. | ~127k (rising) |
| Huihui-ai Abliterated Series | Applies abliteration to almost every major new model. First stop for users who want any specific model without filters. | ~110k |
| Llama 3.2 Dark Champion | Specialist for long-context analysis. 128k context window. Popular for processing entire documents without the model developing ethical objections halfway through. | ~85k |

Source: Gemini research assist, March 2026. Based on HuggingFace monthly downloads, Ollama pull statistics, and LocalLLaMA community activity.

Top five alone: approximately 1.09 million downloads per month.

The question is not whether someone can access a model that answers every question. The question is whether someone wants to. With over a million downloads a month, the answer is documented.

From Cloud to Basement: The Criminal Infrastructure Grows Up

FraudGPT and WormGPT were the first generation. Cloud-based, subscription-priced, darkweb-distributed. FraudGPT launched on Telegram in July 2023 at $200 per month, marketed as an all-in-one kit for phishing campaigns, malicious code generation, and identity fraud. WormGPT surfaced the same month: a Business Email Compromise specialist, trained on malware data, capable of generating phishing emails that human analysts could not distinguish from legitimate correspondence. Over 3,000 confirmed sales before the original operator shut it down under the weight of media attention.

That was the first generation. Cloud-dependent, server-based, takedown-vulnerable.

The second generation is different. New WormGPT variants documented by Cato Networks in February 2025 are built on top of Grok and Mistral's Mixtral: existing models rather than purpose-built ones, manipulated through system prompt engineering and fine-tuning on criminal datasets. No new model architecture required. No server to shut down. The brand "WormGPT" now functions as a label for a class of tools rather than a single product: criminal AI-as-a-service built on the same open-source infrastructure that legitimate organizations use for compliance.

The evolutionary direction is clear. The first generation needed a cloud server and a Telegram channel. The next generation needs neither. Agent Zero — one of the more sophisticated tools documented in 2025 — scrapes LinkedIn profiles, press releases, and financial filings to gather context on executives before generating phishing content. It mentions specific vendors, live projects, contract deadlines. The finance manager who receives the wire transfer request does not see a generic scam. They see something that knows their company.

By early 2025, ENISA documented that AI-supported phishing campaigns represented more than 80 percent of observed social engineering activity worldwide. KnowBe4's 2025 Phishing Threat Trends Report found that 82.6 percent of analyzed phishing emails showed some use of AI. The tools are not emerging. They are mainstream.

The Basement Cost of Entry

Here is the number that matters most in this investigation.

A second-hand RTX 3080 costs €400. Python is free. A quantized 13-billion-parameter model runs at 20 tokens per second on that hardware. Fine-tuning on a specific use case, meaning adjusting an existing model's behavior through targeted training on curated examples, takes approximately 48 hours with publicly available tutorials, no ML degree required.
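Whether a 13-billion-parameter model fits on that card depends on how its weights are stored. The back-of-the-envelope sketch below makes the arithmetic explicit. It assumes the 10 GB variant of the RTX 3080 and counts only the weights, ignoring the KV cache and activations; the function name and the chosen bit widths are illustrative, not taken from any of the reports cited here.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


VRAM_GB = 10.0  # assumption: the 10 GB RTX 3080 variant

for bits in (16, 8, 4):
    need = weight_memory_gb(13, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {need:.1f} GB, {verdict} in {VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions only the 4-bit version fits on the card, which is why consumer-hardware figures like the one above generally refer to quantized models.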

No cloud account. No registration. No logging. No abuse team that receives a notification. No provider relationship of any kind.

The minimum hardware and knowledge threshold for building an effective AI instrument for any purpose — including harmful ones — is lower in 2026 than the threshold for building a professional website was in 2010. That is not a hypothesis. It is what the ESET, CrowdStrike, and Europol threat reports collectively describe without stating it quite so directly.

The technology that allows a care worker in a Belgian youth facility to process sensitive client documentation without data leaving the building also allows someone with different intentions to operate with the same invisibility. The hardware does not ask why.

States Were First

The most sophisticated users of uncontrolled local AI are not criminals in basements. They are governments.

In 2025, Anthropic identified a Chinese state-sponsored campaign that used Claude to automate significant portions of a cyberattack — one of the first documented cases of a state actor deploying frontier AI for largely automated offensive operations. Google documented the same pattern with Gemini: state-directed hackers from China, Russia, Iran, and North Korea used the model across every phase of cyberattacks — from reconnaissance and phishing to command-and-control development and data exfiltration.

North Korea's activity is the most granularly documented. North Korean IT workers feed real-time subtitles of job interviews into AI models to generate accurate, contextually appropriate answers. On a single day, one documented operator received expressions of interest from approximately 20 companies, all for AI-related roles. A country under maximum economic sanctions is partly financing itself through AI-assisted identity fraud at scale.

The timeline is accelerating. According to the UK AI Security Institute, the duration of autonomous AI cyber tasks grew from under ten minutes in early 2023 to over an hour by mid-2025. Open-source AI models can now replicate frontier model capabilities within four to eight months of release.

The gap between a state actor and a criminal in a basement is closing. Same models. Same hardware. Same absence of logging, oversight, or jurisdiction. The difference is budget and intent — and budget is becoming less relevant as the hardware gets cheaper.

The Accountant in Antwerp

The following account is a composite based on documented attack patterns from ENISA, KnowBe4, and cyber insurance claim analyses published in 2024–2025. Details have been constructed to illustrate a realistic scenario; it does not refer to a single specific incident.

The threat is not abstract. It arrives in inboxes.

In November 2025, a mid-sized accounting firm in Antwerp received an email from what appeared to be their software supplier. The sender address matched. The language was flawless Dutch. The email referenced a specific contract renewal, a named contact at the firm, and a payment deadline that corresponded to their actual billing cycle. It requested a SEPA transfer to a new IBAN — a routine change, the email explained, following a banking migration.

The transfer of €47,000 was authorized within the hour.

The attack was reconstructed by the firm's cyber insurer during the claim process. The attackers had scraped the firm's LinkedIn page, its website, public procurement records, and the supplier's own website. An AI model had synthesized this into a profile of the firm's supplier relationship, identified the billing contact by name, and generated correspondence that referenced details no generic phishing template would know. The IBAN led to a mule account in Romania. Recovery: zero.

This is not a sophisticated nation-state operation. It is a €400 GPU, publicly available tools, and forty-eight hours of preparation. The Antwerp firm had antivirus software, email filtering, and staff who had completed cybersecurity awareness training that year. None of it was built for an attack that knew their supplier's name, their contract renewal date, and the name of their accounts payable contact.

ENISA's Threat Landscape 2025 analyzed 4,875 incidents between July 2024 and June 2025. Social engineering — the category that covers this type of attack — was the leading threat vector for European SMEs. The report notes that AI-assisted attacks are now indistinguishable from human-crafted ones in terms of linguistic quality. The only reliable signal left is behavioral: does the request follow the expected process? The Antwerp firm's process included a phone verification step for new IBANs. It was not followed because the email arrived during a busy period and the contact was traveling.
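The behavioral signal described above can be enforced mechanically, so that a busy week cannot skip it. The sketch below is a minimal illustration, assuming a hypothetical payment-request record and a hypothetical rule: any IBAN not already on file for a supplier blocks the payment until a callback to a previously known phone number has been logged. All names and the registry contents are illustrative; the IBANs are the standard published example numbers, not real accounts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PaymentRequest:
    supplier_id: str
    iban: str
    amount_eur: float
    # Set to True only after a call to a phone number that was already on file.
    callback_verified: bool = False


# Hypothetical registry of IBANs previously verified for each supplier.
KNOWN_IBANS: dict[str, set[str]] = {
    "software-supplier": {"BE68539007547034"},
}


def normalize_iban(iban: str) -> str:
    """Strip spaces and upper-case so formatting differences do not matter."""
    return iban.replace(" ", "").upper()


def may_release(req: PaymentRequest, known: dict[str, set[str]] = KNOWN_IBANS) -> bool:
    """Release a payment only to a known IBAN, or to a new one after a callback."""
    if normalize_iban(req.iban) in known.get(req.supplier_id, set()):
        return True
    return req.callback_verified


# A request like the one in the composite scenario: new IBAN, no callback logged.
suspicious = PaymentRequest("software-supplier", "RO49 AAAA 1B31 0075 9384 0000", 47_000.0)
print(may_release(suspicious))  # False: the transfer is held until someone makes the call
```

The point of the design is that the check does not try to judge whether the email looks genuine. It only asks whether the process was followed, which is the one signal linguistic quality cannot fake.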

The attack worked because it was personalized. It was personalized because the data was public. The AI did the labor of synthesis that would have taken a human attacker days. It took minutes.

The Law Has No Door To Knock On

The EU AI Act is the most comprehensive AI regulatory framework in the world. It is also, in one specific and structural way, blind to the threat described in this investigation.

The Act regulates providers — entities that place AI systems on the EU market. It regulates deployers of high-risk AI systems. It regulates general-purpose AI models above a training compute threshold. These are the visible actors: OpenAI, Google, Anthropic, Mistral. Companies with offices, legal identities, and compliance departments that can receive a regulator's letter.

Article 2, paragraph 12 of the AI Act exempts AI systems released under free and open-source licences from the Regulation, unless they are placed on the market or put into service as high-risk systems or fall under the prohibited practices of Article 5 or the transparency obligations of Article 50. Open-source GPAI providers are exempt from documentation requirements to downstream providers and to the AI Office. They lose that exemption if the model is monetized or is classified as posing systemic risk.

The practical consequence is structurally simple. A criminal who fine-tunes Llama on harmful use cases, runs it locally in an apartment in Bucharest, and uses it themselves rather than selling it — is not a provider in the sense of the law. There is no market placement. There is no provider relationship. There is no authority with jurisdiction over the act. There is no door to knock on.

The prohibited practices in Article 5 apply regardless of open-source status, including systems for social scoring, real-time remote biometric identification in public spaces, and manipulation that exploits vulnerable persons. But enforcement requires detection. Detection requires visibility. A local model that never touches a cloud server generates no logs at a provider, no API calls at a platform, no data points at an enforcement authority. In legal terms, it becomes visible only after the harm has occurred.

The AI Act is an enforcement instrument for the visible part of the AI ecosystem. The invisible part — distributed, local, outside any provider relationship — requires a fundamentally different enforcement model that does not yet exist.

The Arms Race Nobody Is Winning

The documented threat landscape in 2026 reads like a capabilities inventory that moves faster than any defense can track.

On the attack side: polymorphic malware generated per victim, adjusting its code on each deployment to evade signature-based detection. Spearphishing personalized at the individual level at industrial scale: a message that references your name, your employer, your recent activity, generated in seconds per target. Deepfake audio and video for executive fraud: a documented case in Hong Kong in 2024 resulted in transfers of roughly $25 million, authorized by a finance employee after a video call with what appeared to be the company's CFO and several colleagues. Every other participant on that call was synthesized. Automatic exploit development: AI systems scanning CVE databases and writing proof-of-concept attack code before defensive patches are deployed.

On the defense side: AI-powered anomaly detection, behavioral endpoint security, LLM-detection in email filters, network traffic analysis for command-and-control patterns. Meaningful capabilities, genuinely deployed.

The structural problem: defense scales more slowly than attack by definition. An attacker needs to succeed once. A defender must succeed every time. When the attacker runs a local model generating novel attack variants faster than defensive signatures can be updated, the defense has a structural information deficit that cannot be closed by adding more defenders.
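The asymmetry can be put in numbers. The sketch below rests on a strong simplifying assumption: every attack attempt is independent and gets past the defense with the same probability p. The chance that at least one of n attempts succeeds is then 1 - (1 - p)^n. The values of p and n are illustrative, not measured.

```python
def breach_probability(p_single: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - p_single) ** attempts


# Assumption for illustration: the defense stops 99 percent of individual attempts.
for n in (1, 10, 100, 1000):
    print(f"{n:>5} attempts at 1% each: {breach_probability(0.01, n):.1%}")
```

Even with a defense that blocks 99 percent of individual attempts, 100 attempts leave the attacker with roughly a 63 percent chance of getting through at least once. Cheap generation of novel variants raises n, and the defender's per-attempt success rate has to rise with it just to stay level.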

This is not a solvable problem with current tools. It is an asymmetry that compounds as the hardware becomes cheaper, the models become more capable, and the knowledge threshold continues to fall.

The Honest Conclusion

This investigation is written by someone who believes that local AI is the right direction for European organizations, households, and individuals who value sovereignty over their data and compliance with the regulatory frameworks that govern their work.

That belief is unchanged. The paper's thesis stands.

But the same properties that make local AI sovereign — no cloud dependency, no provider logging, no external visibility — make it structurally invisible to every enforcement framework currently in operation. The EU AI Act regulates providers. Local AI, by definition, often has none. Europol and ENISA document the threat landscape but focus on cloud-based model misuse. The local threat — distributed, fine-tuned, jurisdictionless — is named in their reports and structurally unaddressed in their enforcement capacity.

The conclusion is not: stop local AI. Restricting open-source models would not stop state actors who build their own. It would not stop well-resourced criminal organizations. It would stop European SMEs, researchers, and individuals who need sovereign AI tools to comply with regulations that cloud providers make difficult to satisfy.

The conclusion is: the current regulatory architecture addresses the visible part of the AI ecosystem. The invisible part requires a fundamentally different approach — one built around behavioral detection, cross-border law enforcement cooperation on AI-enabled crime, and enforcement frameworks that do not depend on a provider relationship to function.

Europe is building the most sophisticated AI regulatory framework in the world. It is doing so with excellent tools for the actors it can see. The actors it cannot see are already operating. They have been for some time.

Tor was built by the US Navy. The technology was neutral. The outcomes were not. Local AI is the same technology in that sense — and the honest acknowledgment of that fact is not a reason to retreat from it. It is a reason to build the second layer of governance that the first layer cannot reach.

This investigation is part of the research series accompanying The Great Return: Why 2026 Marks the Tipping Point for Local AI Migration in Europe — published February 2026. Full paper: DOI 10.5281/zenodo.18511984